Quickplay – From Disrupted to Disruptor: How Broadcasters Win the Creator Era

Andre Christensen, Co-Founder and CEO, Quickplay

Don’t be fooled. We are in the midst of the biggest opportunity ever seen for broadcasting.

You’re thinking, “How’s that possible when 2025 marks the year when…”

- …more than half of Americans prefer news via social media.

- …streaming has surpassed linear in total viewing hours.

- …the creator economy is pulling in more ad revenue than traditional media.

Doesn’t that define a decline, not an opportunity?

Only if you dig your heels into the old ways and resist adopting a multiplatform, creator-native business model.

This article outlines tips for creating value with new approaches to distribution, measurement, and monetization.

The Creator Economy Has Renamed the Game

Broadcasters are scrambling to adapt to the powerful creator economy and are frazzled by how quickly these newcomers have rewritten the entire monetization playbook. The likes of MrBeast and Nebula were born as integrated media businesses that seize monetization opportunities beyond the subscription and ad revenues broadcasters traditionally rely on.

This new business model doesn’t put broadcasters at a disadvantage. In fact, their vast content libraries, brand equity, and production expertise are major advantages that modern-day creators can’t easily replicate. These attributes also serve as institutional assurances for viewers, whether they realize it or not.

Resist Legacy Thinking: Platforms Multiply Your Audience, Not Threaten It

Millennials, Gen Z and Gen Alpha represent ~70% of the global population and are more engaged, more monetizable, and more reachable than any prior generation.

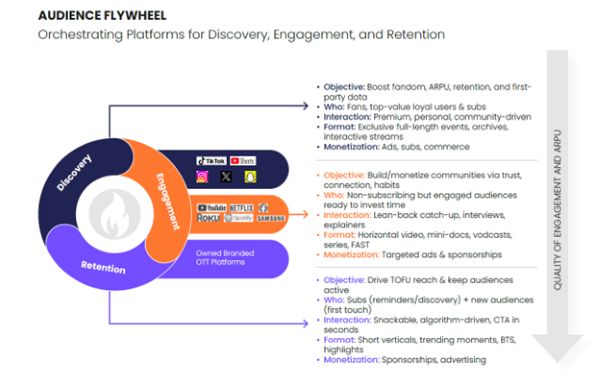

Many broadcasters focus on viewer erosion and concerns over churn. While it’s true viewers are watching content outside of your owned platforms, that doesn’t mean your audience is smaller. It’s quite the opposite: it’s multiplied. It’s just more spread out than you’re used to.

Here are three important myths to debunk immediately:

- Myth: Gen Z and Millennials are not willing to pay for content. While they might not sign up for the exact same offerings as older generations, they actually outspend them by 1.5x to 3x on digital subscriptions, content, and eCommerce. This represents huge upside potential for broadcasters willing to broaden their approach to monetization in ways aligned with the habits and preferences of these audiences.

- Myth: Gen Z only watches user-generated content. In reality, they consume both professional and social content. They spend more time watching video overall than older generations; it’s just distributed differently across platforms. They are as likely to watch produced TV content as they are non-TV content (e.g., UGC, gaming live streams, shorts on social). Nearly two-thirds of the global online population consumes short-form video content on TikTok, YouTube Shorts, Instagram Reels, and other platforms each day, compared to only 47% who watch broadcast TV channels and 46% who watch streaming services, according to Ampere Analysis.

- Myth: Viewer cannibalization is real. There are only upsides to embracing third parties within your strategy. For your most loyal viewers, reaching them where they are is an effective flywheel that leads them back to your own branded platforms. And for those who might not visit your properties at all, you’ll reach them in their preferred environment with content that could entice them to explore your libraries further, driving deeper engagement and higher-value monetization.

Tap Into AI to Accelerate Your Creator-Led Mindset

AI enables traditional broadcasters to operate as agile content creators at scale. Broadcasters’ traditional one-size-fits-all approach has become outdated – viewers are used to hyper-personalization. There’s too much content out there for a viewer to settle for blanket recommendations when they can find exactly what they want elsewhere with less effort.

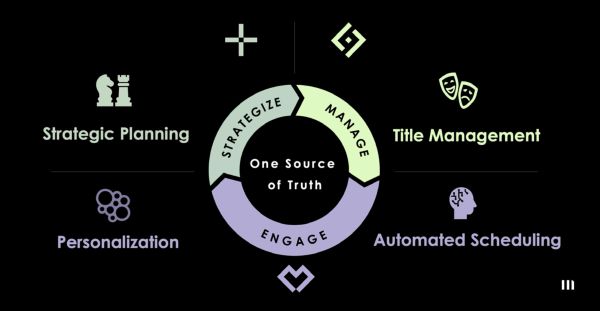

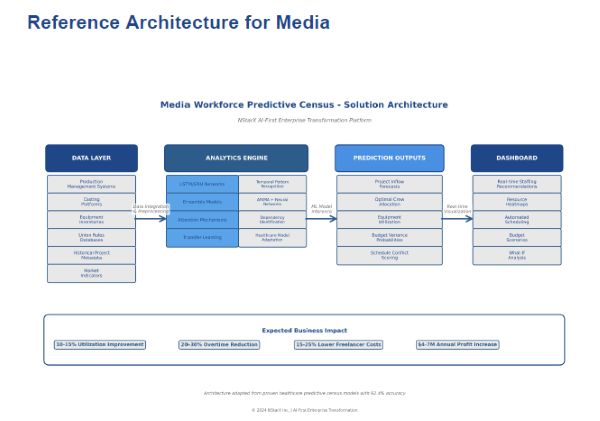

AI allows for deeper awareness of viewers’ preferences, habits, and demographics, combined with the ability to tailor and distribute personalized content at scale. Consider these capabilities:

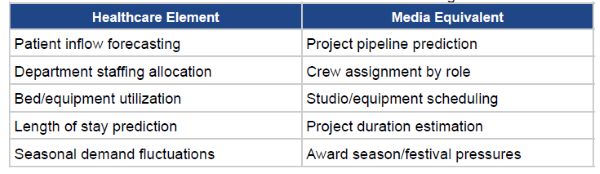

- Automating content-to-audience flow across linear, OTT and social media. AI lets broadcasters compete with the publishing velocity of the creator economy while maintaining the production quality and editorial standards that distinguish their content.

- Dynamically converting a single story or piece of content into multiple formats, customized for viewers and their preferred viewing platform.

- Transforming vast content libraries that may be lying dormant into growth and engagement engines and leveraging short-form creator tools as the front porch to these longer-form pieces.

- Making relevant, personalized discovery a reality and a new route to monetization.

What’s Ahead

Broadcasters aren’t condemned to become commoditized content suppliers feeding the global streaming machine. The assets they have—trusted brands, professional content, local relevance, live sports and news—remain highly valuable. But those advantages can perish if new strategies aren’t considered. The broadcasters that will thrive are the ones that transform from program schedulers to platform orchestrators.

To win as the perceived underdog, view the disruption we all anticipated as your accelerant to becoming the disruptor:

- Meet your viewers where they are. Gone are the days when your own branded properties were the only places your content resided. Embrace new distribution models and third-party platforms, not for syndication, but as a part of your ecosystem. Allow AI to provide the agility and speed expected today while maintaining the trust and standards your legacy embodies.

- Relevance outperforms reach now. Success is measured by deeper impact, not broader touches. Viewers today embrace community, and your measures of success must do the same, shifting away from reliance on passive viewers.

- Engagement must go beyond viewing to include commerce. A single data loop spanning unified ads, subscriptions, bundling, and transactions should produce insights that inform growth and value-add strategies across every screen.

Think fast – or you’ll miss the biggest opportunity broadcasters have ever seen.